Your Employees Are Already Using AI. Do You Know Where Your Data Is Going?

Artificial intelligence is entering organizations much faster than most leadership teams expect. While executives often assume that AI adoption begins with a formal strategy, vendor selection, or a technology roadmap, the reality inside many companies looks very different. AI tools are already being used across departments by employees who are simply trying to work more efficiently.

A marketing manager may use ChatGPT to draft campaign ideas or summarize research. A finance analyst may upload spreadsheets into an AI tool to identify patterns or generate summaries. Someone in operations might use an AI platform to draft reports or analyze internal data. These actions often happen quietly and require no involvement from the IT department.

For many organizations, this creates what is increasingly described as “shadow AI.” Employees begin using AI tools independently, without formal policies, governance, or leadership visibility.

This challenge is particularly significant for mid-market organizations. Companies with $50 million to $1 billion in revenue often operate technology environments that look very similar to those of large enterprises, including cloud platforms, SaaS applications, remote endpoints, and complex data flows. However, they typically manage this complexity with smaller IT teams and fewer formal governance structures.

When AI tools enter this environment without oversight, the gap between enterprise-level complexity and mid-market operating models can create new security exposure.

For CEOs and CFOs, this raises a fundamental question:

Where is company data actually going when employees use AI tools?

AI Adoption Is Moving Faster Than Governance

Many organizations encourage employees to explore AI because it can improve productivity and reduce repetitive work. However, these tools often spread inside companies faster than organizations can establish policies and security controls.

Employees are already using AI tools to:

• summarize reports and documents

• generate marketing and sales content

• analyze spreadsheets and financial data

• draft internal communications

• automate repetitive operational tasks

Most AI platforms require employees to enter prompts or upload information so the system can generate results.

Without clear guidelines, employees may unintentionally upload information such as:

• internal financial projections

• customer or vendor data

• confidential strategy presentations

• legal or operational documents

Once that information is entered into an external AI platform, organizations may have limited visibility into how that data is processed, stored, or reused.
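
One way to make this risk concrete is a simple screen that checks text before it is sent to an external AI tool. The sketch below is illustrative only: the function name `screen_prompt` and the three patterns are assumptions made for this example, not a recommended rule set, and a real control would be driven by the organization's own data classification policy.

```python
import re

# Hypothetical patterns for illustration; a real policy would be defined
# by the organization's own data classification rules.
SENSITIVE_PATTERNS = {
    "confidentiality marking": re.compile(
        r"\b(confidential|internal only|do not distribute)\b", re.IGNORECASE
    ),
    "email address": re.compile(r"\b[\w.+-]+@[\w-]+\.[\w.-]+\b"),
    "possible card number": re.compile(r"\b(?:\d[ -]?){13,16}\b"),
}


def screen_prompt(text: str) -> list[str]:
    """Return the names of every sensitive pattern found in the text."""
    return [
        name
        for name, pattern in SENSITIVE_PATTERNS.items()
        if pattern.search(text)
    ]


if __name__ == "__main__":
    prompt = "Summarize this CONFIDENTIAL forecast for jane.doe@example.com"
    findings = screen_prompt(prompt)
    if findings:
        print("Review before sending to an external tool:", ", ".join(findings))
    else:
        print("No sensitive patterns detected.")
```

A pattern check like this catches only obvious cases. It is a prompt for human review, not a substitute for policy or training.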

A Simple Question Many Employees Cannot Answer

A recent discussion with an executive team highlighted how quickly this situation can develop.

An employee had uploaded several internal documents into an AI tool to summarize the content and generate recommendations. The employee was simply trying to complete work more efficiently, and the action itself appeared reasonable.

However, when the employee was asked a simple follow-up question, the response revealed a deeper issue.

The question was:

“Was that an internal AI system or a public AI tool?”

The employee could not answer.

The individual did not know whether the AI system was running inside the company’s environment or whether the documents had been transmitted to an external platform.

This situation illustrates an awareness gap that many organizations now face. Employees are adopting AI tools rapidly, but they often do not understand the difference between internal AI systems, enterprise-approved AI platforms, and publicly available AI tools on the internet.

Without that awareness, employees may unintentionally share sensitive information with systems operating outside the organization’s security controls.

This is not simply a technology problem. It is a governance and visibility problem.

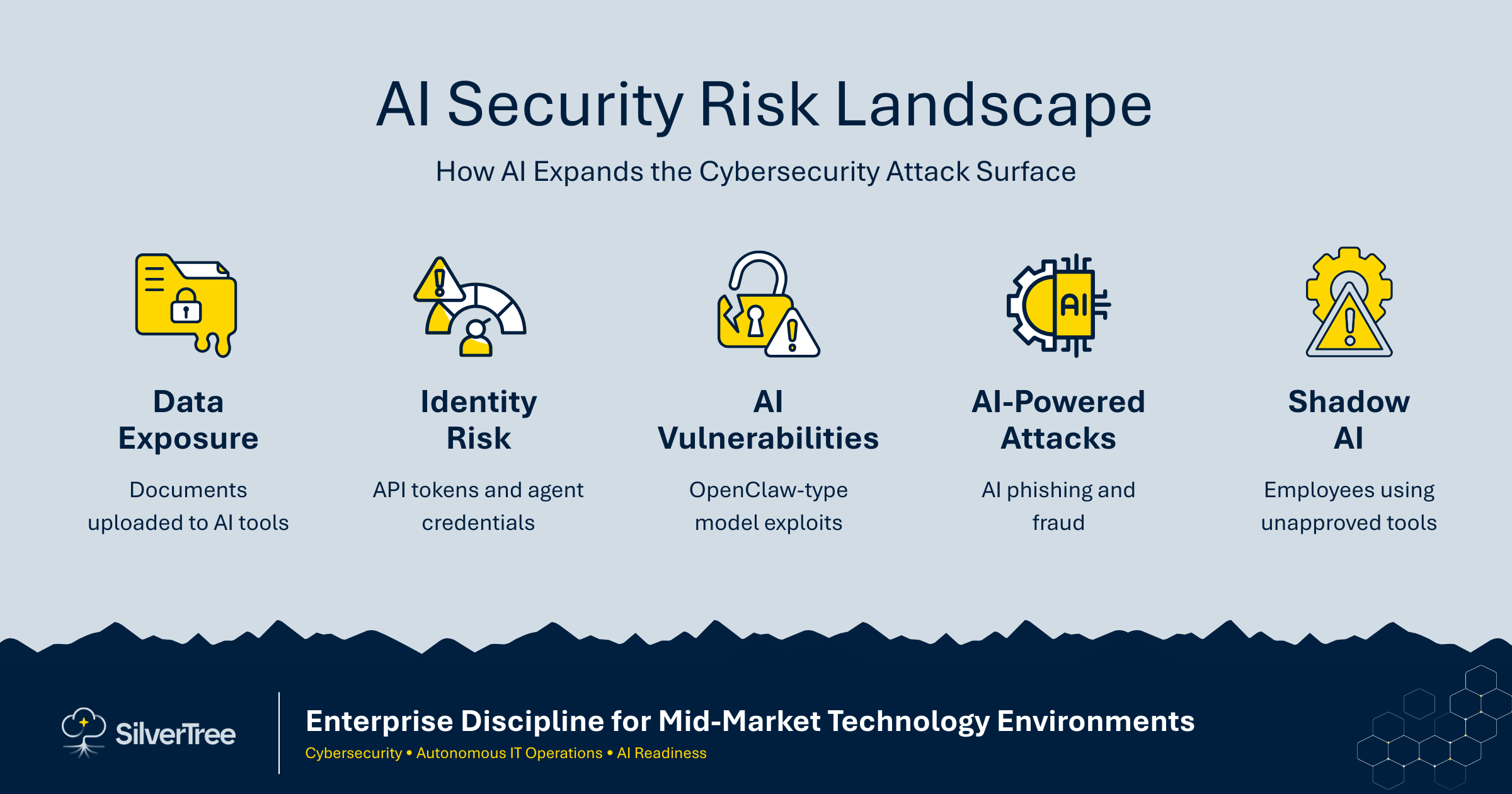

AI Is Expanding the Cybersecurity Risk Landscape

Artificial intelligence is also creating new challenges for cybersecurity teams. Security researchers are already identifying vulnerabilities in how AI systems process information, respond to prompts, and connect with enterprise environments.

One example that received attention in the security community was the OpenClaw vulnerability, which highlighted weaknesses in how certain AI models process external inputs and interact with connected systems. While issues like this are still evolving, they demonstrate that AI platforms can introduce new attack vectors if they are deployed without careful oversight.

Cyber attackers are also beginning to use AI themselves. AI tools can generate convincing phishing messages, automate social engineering campaigns, and scale attacks far more efficiently than traditional methods.

Organizations are therefore facing a new cybersecurity environment in which:

• employees are using AI tools internally

• attackers are using AI to improve the scale and sophistication of their attacks

• vulnerabilities are emerging in AI systems themselves

For mid-market organizations with smaller security teams, managing these risks can be particularly challenging.

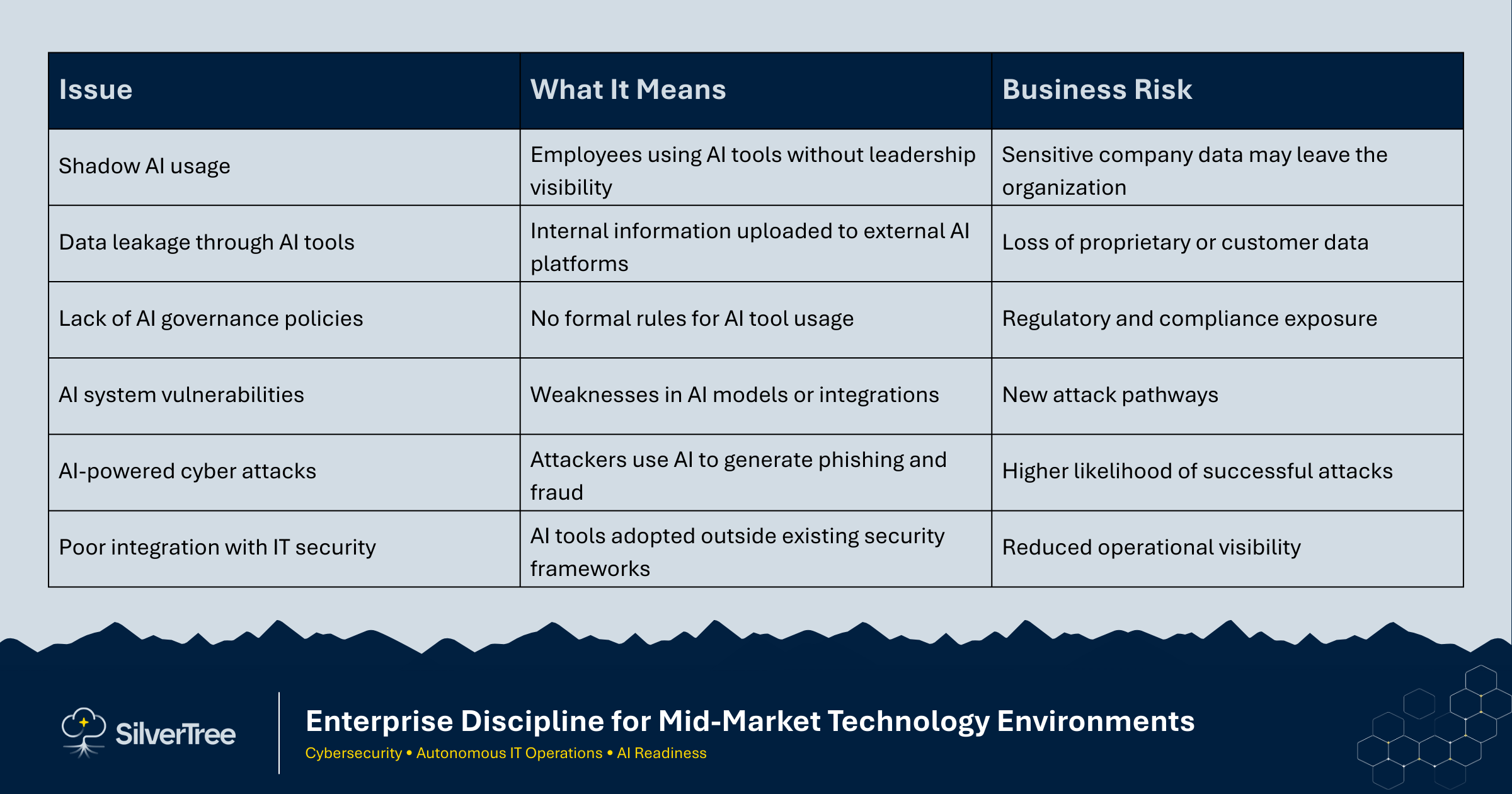

Common AI and Security Challenges

As organizations begin experimenting with AI technologies, several common challenges are emerging.

These issues are already appearing across industries as organizations experiment with AI tools without a clear operating model.

Questions Leadership Should Be Asking

AI adoption is no longer just a technology conversation. It is becoming a leadership and governance issue that affects risk management, data protection, and operational oversight.

Executives should begin asking several practical questions:

• What AI tools are employees currently using?

• What company data is being entered into those systems?

• Do we have policies governing AI usage?

• Have we put the required guardrails and governance in place for AI usage?

• Are we reviewing the security posture of AI platforms before adoption?

• Do we understand how AI tools interact with internal systems and data?

In many organizations, these questions reveal that AI adoption has already expanded beyond what leadership expected.

Preparing the Organization for Responsible AI Adoption

Organizations that are successfully adopting AI tend to take a structured approach rather than allowing uncoordinated experimentation. Instead of focusing only on tools, they evaluate whether their environment is prepared to support AI safely.

This preparation usually focuses on four areas.

Use case value

Organizations that take the time to fully understand each use case, its value proposition, and how performance and value realization will be measured have a significantly higher rate of success with AI.

Data readiness

Organizations must understand where their data resides, how it is governed, and which data sets can safely interact with AI systems.
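
As a minimal sketch, the question of which data sets can safely interact with which AI systems can be written down as an explicit policy table. The three tool tiers below mirror the distinction between public AI tools, enterprise-approved platforms, and internal AI systems. The data classification levels, the mapping between them, and the function name `is_allowed` are hypothetical and would differ from one organization to the next.

```python
from enum import IntEnum


class DataClass(IntEnum):
    """Hypothetical data classification levels, least to most sensitive."""
    PUBLIC = 0
    INTERNAL = 1
    CONFIDENTIAL = 2


class ToolTier(IntEnum):
    """Where the AI tool runs and who governs it."""
    PUBLIC_TOOL = 0          # publicly available AI tool on the internet
    ENTERPRISE_APPROVED = 1  # vendor platform reviewed and approved by the company
    INTERNAL_SYSTEM = 2      # AI system running inside the company's environment


# Assumed policy: the most sensitive data class each tier may receive.
MAX_DATA_CLASS = {
    ToolTier.PUBLIC_TOOL: DataClass.PUBLIC,
    ToolTier.ENTERPRISE_APPROVED: DataClass.INTERNAL,
    ToolTier.INTERNAL_SYSTEM: DataClass.CONFIDENTIAL,
}


def is_allowed(data_class: DataClass, tool_tier: ToolTier) -> bool:
    """Return True if policy permits this data class in this tool tier."""
    return data_class <= MAX_DATA_CLASS[tool_tier]


if __name__ == "__main__":
    for tier in ToolTier:
        allowed = [d.name for d in DataClass if is_allowed(d, tier)]
        print(f"{tier.name}: {', '.join(allowed)}")
```

Writing the policy as a table makes it easy to review with leadership and to adjust as new tools are approved.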

Security posture

Companies must evaluate how AI platforms interact with internal systems and ensure that sensitive information remains protected.

Operational discipline

Clear policies, monitoring, and governance frameworks help ensure that AI adoption occurs in a controlled and responsible way.

For mid-market organizations, this discipline is particularly important because AI adoption often occurs faster than governance structures can adapt.

AI Governance Is Now a Leadership Responsibility

Artificial intelligence is quickly becoming part of everyday work across finance, marketing, operations, and customer service. In many organizations, employees have already begun using AI tools to improve productivity and efficiency.

The challenge for leadership is not whether AI will be used inside the organization.

In most cases, it already is.

The more important question is whether the organization has visibility into how these tools are being used and where company data may be flowing.

For mid-market CEOs and CFOs, this challenge is especially important because their organizations often operate enterprise-level technology environments with smaller teams and fewer layers of governance.

Organizations that bring enterprise discipline to AI governance will be far better positioned to capture the benefits of AI while protecting the information and systems that matter most.

Evaluate Your Organization’s AI Readiness

Employees are already using AI tools. The question is whether your organization has the governance, data controls, and security foundations required to use them safely.

Silver Tree’s AI Readiness Assessment identifies exposure risks and the operational controls required for responsible AI adoption.

GET AN ASSESSMENT: https://www.silvertreeservices.com/assessments